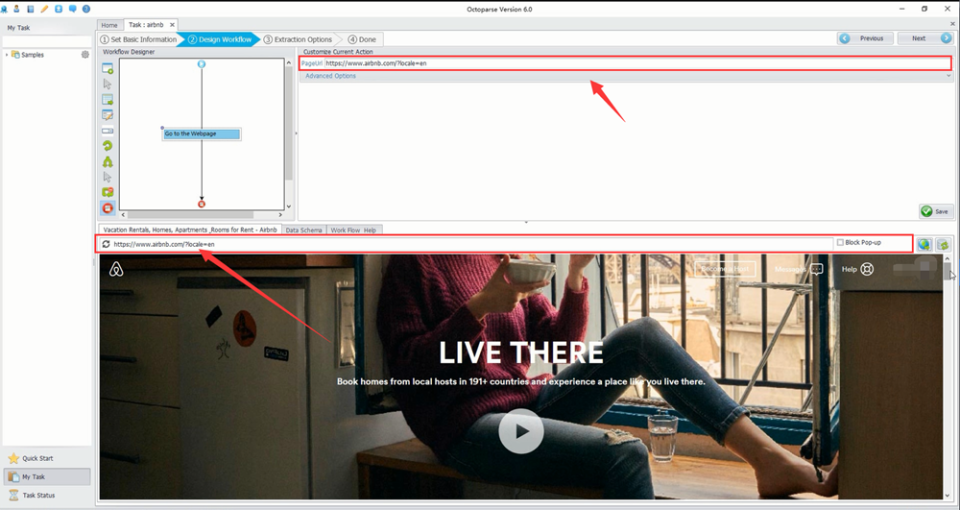

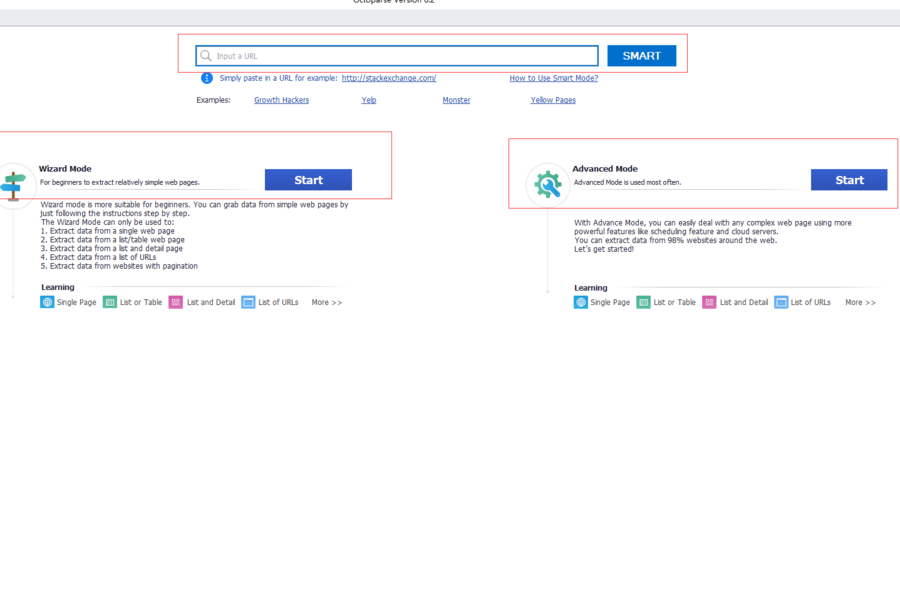

Sign up and log in with an Octoparse account for free (the free plan offers unlimited pages to scrape and unlimited storage), then download the installer and unzip the downloaded file. You can export data in the format of your choice: CSV, Excel, HTML, TXT, or a database (MySQL, SQL Server, and Oracle).

The following are the key features of Octoparse.

- Point-and-click interface: Octoparse applies machine learning algorithms to accurately locate the data at the moment you click on it. Simply point and click web elements, and Octoparse will identify all the data in a pattern and extract it automatically. No coding is required for most websites.
- Deal with dynamic and static websites: Octoparse lets you scrape behind a login, fill in forms, input search terms, click through infinite scroll, and switch drop-downs. You can capture anything from a webpage, such as text, links, image URLs, or HTML code.
- Cloud service (paid editions): Octoparse's cloud platform allows for 6 to 14 times faster data extraction and runs the extraction task 24/7. Data is scraped and stored in the cloud and is accessible from any machine.
- Automatic IP rotation: The Octoparse cloud service is supported by hundreds of cloud servers, each with a unique IP address.

We used Octoparse to scrape data from a list of URLs, without any coding at all.

Data is valuable, and it's not always easy to get the correct data from web sources because all websites have different templates and designs. There are various ways to acquire data from websites of your preference. Crawlers, like Google's, look at webpages and follow links on those pages. They go from link to link and bring data about those webpages back to Google's servers. This can help us find what we are looking for in a matter of seconds, but the data is not structured and hence can't be used for analysis.

We recently came across an automated web crawler called Octoparse. Using Octoparse, you can develop extraction patterns and define extraction rules that tell Octoparse which website to open, how to locate the data you plan to scrape, and what kind of data you want. Octoparse can scrape any data visible on a webpage. It has many built-in tools and APIs to crawl and re-format the extracted data using a user-friendly point-and-click UI.

In this post, we will talk about Octoparse and the different extraction rules we configured to scrape our blog. We'll extract metadata about the posts published on this blog. We managed to do that with Octoparse without any coding at all. Below is what we are going to cover in this post.

Octoparse is a Windows application designed to harvest data from both static and dynamic websites. The software simulates human actions to interact with web pages. To make data extraction easier, Octoparse supports filling out forms, entering a search term into a text box, and so on. You can run your extraction project either on your own local machine (Local Extraction) or in the cloud (Cloud Extraction). Octoparse's cloud service (available only in paid editions) is useful for fetching large amounts of data to meet large-scale extraction needs.
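To make the link-following behaviour of crawlers mentioned above more concrete, here is a minimal Python sketch of a breadth-first crawl. It is not how Octoparse or Google works internally. The `LinkCollector` class, the `crawl` function, and the in-memory `SITE` dictionary are all hypothetical names made up for illustration, and the "site" stands in for real HTTP requests so the example runs offline.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=50):
    """Breadth-first crawl. `fetch` maps a URL to HTML text, or None."""
    seen = {start_url}
    queue = deque([start_url])
    order = []
    while queue and len(order) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # page not available, skip it
        order.append(url)
        parser = LinkCollector()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return order


# Hypothetical in-memory "site" (an assumption, not real pages).
SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a>',
    "https://example.com/b": '<a href="/">Home</a>',
}

visited = crawl("https://example.com/", SITE.get)
print(visited)
```

The `seen` set is what keeps the crawl from looping forever when pages link back to each other. A real crawler would replace `SITE.get` with a function that downloads pages and would also need to respect rate limits and each site's terms.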
Modern data extraction tools are robust no-code/low-code solutions that support business processes. With three types of data extraction tools (batch processing, open-source, and cloud-based), you can create a cycle of web scraping and data analysis. So, let's review the best tools available on the market.

Did you know you can scrape data from webpages without writing a single line of code? In this post, we will talk about a tool called Octoparse.

Manually extracting data from a website (copying and pasting information into a spreadsheet) is time-consuming and difficult when dealing with big data. If the company has in-house developers, it is possible to build a web scraping pipeline. There are several ways to go about web scraping.

It is possible to quickly build software with any general-purpose programming language like Java, JavaScript, PHP, C, C#, and so on. Nevertheless, Python is the top choice because of its simplicity and the availability of libraries for developing a web scraper.

A data service is a professional web service that provides research and data extraction according to business requirements. Such services may be a good option if there is a budget for data extraction.

This method may surprise you, but Microsoft Excel can be a useful tool for data manipulation. With web scraping, you can easily save information into an Excel sheet. The only problem is that this method can be used for extracting tables only.
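As a rough illustration of the Python route and of table extraction into a spreadsheet-friendly format, the sketch below reads an HTML table and writes it out as CSV using only the standard library. `TableParser`, `table_to_csv`, and `SAMPLE_HTML` are hypothetical names and sample data invented for this example. A real page would usually need more robust handling, for example nested tables or merged cells.

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the cell text of every table row into self.rows."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row being read, or None
        self._cell = None  # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._cell = None


def table_to_csv(html):
    """Return the table rows found in `html` as CSV text."""
    parser = TableParser()
    parser.feed(html)
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(parser.rows)
    return buffer.getvalue()


# Made-up sample data (an assumption, not scraped from a real site).
SAMPLE_HTML = """
<table>
  <tr><th>Title</th><th>Comments</th></tr>
  <tr><td>First post</td><td>3</td></tr>
  <tr><td>Second post</td><td>0</td></tr>
</table>
"""

csv_text = table_to_csv(SAMPLE_HTML)
print(csv_text)
```

The resulting CSV text can be saved to a file and opened directly in Excel.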
